Heterogeneous Federated Learning Streamlined Through Model Distillation

In machine learning, a small private dataset can be a significant hurdle to training a good model. One well-established remedy is transfer learning, which leverages large public datasets when private data is scarce. This approach is at the heart of the FedMD framework, a notable development in the field of federated learning.

The FedMD framework, detailed in "FedMD: Heterogenous Federated Learning via Model Distillation" by Daliang Li and Junpu Wang [3], addresses the challenge of data and device heterogeneity. This is a common issue in federated learning, where a participant may hold rich data yet be unable to customize the shared global model.

The framework allows each participant to have its own independently and privately designed model. This is a significant departure from traditional federated learning, where all participants train a single globally shared model.

In the FedMD framework, participants communicate by computing class scores on the public dataset, and knowledge distillation serves as the translator that turns those scores into a training signal for other models. Because only predictions are exchanged, distillation is model-agnostic: it transfers knowledge from one model into another regardless of either model's architecture.
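To make "transferring knowledge through class scores" concrete, here is a minimal sketch of a standard Hinton-style distillation loss: the KL divergence between temperature-softened softmax distributions of a teacher's and a student's class scores. This is an assumption for illustration; FedMD's exact loss may differ, and the function names and the temperature value are hypothetical.

```python
import math
from typing import List, Sequence


def softmax(scores: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Convert raw class scores (logits) into probabilities, softened by temperature."""
    scaled = [s / temperature for s in scores]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(
    student_scores: Sequence[float],
    teacher_scores: Sequence[float],
    temperature: float = 2.0,  # hypothetical choice
) -> float:
    """KL(teacher || student) on temperature-softened distributions.

    Only class scores are compared, so teacher and student may have
    completely different architectures.
    """
    p = softmax(teacher_scores, temperature)
    q = softmax(student_scores, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    # Scaling by T^2 keeps gradient magnitudes comparable across temperatures.
    return kl * temperature ** 2


teacher = [2.0, 1.0, 0.1]
print(distillation_loss(teacher, teacher))           # identical scores give zero loss
print(distillation_loss([0.0, 0.0, 5.0], teacher))   # a disagreeing student is penalized
```

The loss is zero when the student reproduces the teacher's scores and grows as they diverge, which is all a model-agnostic "translator" needs.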

Beyond heterogeneity in the data, the paper focuses on a different type of heterogeneity: differences in the local models themselves. The authors run experiments with 10 participants, each with its own convolutional network that differs in the number of channels and layers.

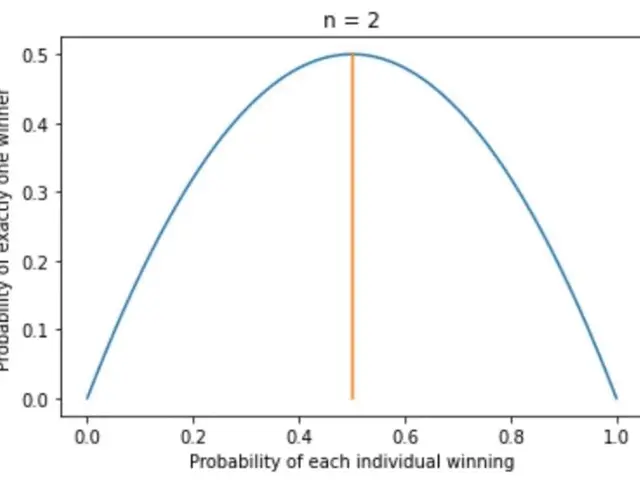

The participants are first trained on a public dataset, MNIST or CIFAR10, on which they achieve state-of-the-art accuracy. After further training on their own small private datasets, their test-accuracy curves gradually approach the best accuracy achieved under the FedMD framework, as shown in Fig 4.

The FedMD framework eases both the statistical and the model heterogeneity challenges of federated learning by combining transfer learning with knowledge distillation. In vanilla federated learning, the server sends the global model to each participant before a round of training and, after the round, updates it with the average of the participants' local updates, so every participant must train the same architecture. FedMD works differently.
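For contrast, the aggregation step of vanilla federated learning can be sketched as FedAvg-style weighted averaging of parameters, with each participant weighted by its number of training samples. Representing each model as a flat list of floats is a simplifying assumption, and the function name is hypothetical. The shape check shows why this scheme forces every participant onto one architecture.

```python
from typing import List, Sequence


def fedavg(
    local_weights: Sequence[Sequence[float]],
    num_samples: Sequence[int],
) -> List[float]:
    """Average flattened parameter vectors, weighted by each participant's data size.

    Vectors are averaged element by element, so every participant must
    share exactly the same architecture (and therefore the same shape).
    """
    if not local_weights or len(local_weights) != len(num_samples):
        raise ValueError("need one sample count per participant")
    dim = len(local_weights[0])
    if any(len(w) != dim for w in local_weights):
        raise ValueError("vanilla FL requires identical model shapes")
    total = sum(num_samples)
    return [
        sum(w[i] * n for w, n in zip(local_weights, num_samples)) / total
        for i in range(dim)
    ]


# Two participants with equal data: the new global model is the plain mean.
print(fedavg([[1.0, 2.0], [3.0, 4.0]], [1, 1]))
```

A participant with a differently shaped network would trigger the `ValueError`; FedMD sidesteps this by exchanging class scores instead of parameters.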

In the FedMD framework, each participant first trains its own model on the public dataset to convergence and then trains it on its own small private dataset. In each round, every participant computes class scores on the public dataset and sends them to a central server. The server averages these scores into an updated consensus and sends it back; each participant then trains its model to approach the consensus on the public data, which thus serves as the new training target, and revisits its own private data for a few epochs before the next round.
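One collaboration round can be sketched as below. The `Participant` interface and its method names are hypothetical; the step order (communicate, aggregate, distribute, digest, revisit) follows the round described above. Each participant is free to implement `class_scores`, `digest`, and `revisit` with whatever architecture it likes, as long as it returns one row of class scores per public example.

```python
from typing import List, Protocol, Sequence

Scores = List[List[float]]  # one row of class scores per public example


class Participant(Protocol):
    """Hypothetical interface; each participant may wrap a different model."""

    def class_scores(self, public_x: Sequence) -> Scores: ...

    def digest(self, public_x: Sequence, targets: Scores) -> None: ...

    def revisit(self) -> None: ...


def consensus(all_scores: Sequence[Scores]) -> Scores:
    """Element-wise average of all participants' class scores."""
    n = len(all_scores)
    rows, cols = len(all_scores[0]), len(all_scores[0][0])
    return [
        [sum(s[r][c] for s in all_scores) / n for c in range(cols)]
        for r in range(rows)
    ]


def fedmd_round(participants: Sequence[Participant], public_x: Sequence) -> Scores:
    # Communicate: each participant scores the shared public data.
    all_scores = [p.class_scores(public_x) for p in participants]
    # Aggregate: the server averages the scores into a consensus.
    target = consensus(all_scores)
    for p in participants:
        # Distribute and digest: train toward the consensus on public data.
        p.digest(public_x, target)
        # Revisit: a few epochs on the participant's own private data.
        p.revisit()
    return target
```

Note that the server never sees model weights or private data, only averaged predictions, which is what lets the architectures differ freely.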

Because participants exchange only class scores on the public data, each one can craft its own model to meet its distinct specifications. The class scores act as the translator between participants, and the server's only job is to update the consensus with the average of the class scores computed by each participant.

In conclusion, the FedMD framework represents a significant step forward in the field of federated learning, offering a solution to the challenges of data and device heterogeneity by leveraging transfer learning and knowledge distillation.
